The Resilience Checklist: What to Ask Before the Next Outage

Before the deep-dive, the anchor artifact. Your vendor (Cobalt, Middesk, Heron, Ocrolus, Baselayer, or anyone else claiming live-ping) should be able to answer these cleanly. If they deflect, you have just learned something about their architecture.

• Per-state circuit breakers, independently scoped. When Oregon goes down, do California verifications continue to flow, or does the whole pipeline degrade? Global breakers are an architectural smell; per-state is the 2026 production standard. Ask your vendor to describe the scoping.

• Error classification distinguishing retryable from permanent. Network failures, 5xx responses, and 429 rate-limits are retryable. Auth failures and data errors are not. A vendor that retries everything amplifies outages; a vendor that retries nothing loses transient verifications. Ask what their classification logic looks like.

• Retry cap matched to idempotency-key retention window. Retries beyond the vendor's idempotency-key retention produce duplicate verifications (and duplicate charges). Typical retention is 24 hours to 7 days. Ask both numbers and confirm alignment.

• Dead-letter queue visible to integrators. Verifications that exhaust retries have to go somewhere that you can see and act on. A vendor-internal-only dead-letter is an audit-defense gap. Ask whether you can query the dead-letter from your own integration.

• Graceful-failure policy documented per verification class. Onboarding verifications, portfolio-monitoring sweeps, and regulatory-deadline verifications each need different failure behavior. Ask for the policy document.

• Runbook for common state-source outages. Oregon, Delaware, California, New Jersey each have distinct failure signatures. Ask what your vendor's ops team actually does when each goes down.

Shops that can answer these in a procurement call are production-ready. Shops that cannot are telling you something.

Why State Sources Fail, and What the Failures Cost You

State registry systems are government IT infrastructure. Maintained on government budgets, run on government procurement cycles. The failure modes are predictable and recurring.

Observed state-source uptime varies by state and time window. Published vendor data and industry observation put most state SOS systems in the 97 to 99.5 percent uptime band during business hours, with wider variance in non-business hours. Oregon and a handful of slower states drop lower during maintenance cycles. These are directional ranges, not SLA-grade numbers; pin down your own observed values against your vendor over a representative month rather than relying on the general figure.

The six dominant failure modes that affect your pipeline:

• Scheduled maintenance. State IT teams take registries offline on posted or unposted schedules. Business impact: predictable if you track the posted schedules; surprise outages if you do not.

• Unscheduled outages. Minutes to days. Business impact: verifications pending, onboarding UX degraded, ops fielding calls.

• Rate limiting (explicit 429 or implicit throttling). During peak hours on high-volume states (California, New York, Texas), 429 rates can run 1 to 3 percent of calls. Business impact: retries amplify the problem if not capped.

• Response-time degradation. Source is up but slow. Oregon's baseline 5-minute response can extend under load. Business impact: your async queue fills up, latency SLAs slip.

• Partial failures. Search endpoint works, document retrieval fails. Business impact: you get a verification but cannot produce the audit artifact.

• Schema changes. State updates response format without notice. Business impact: production verifications start returning null fields, downstream rules misfire silently.

Each failure mode requires a different retry strategy. A single retry-on-any-error loop produces the wrong behavior on most of them.

Retry Logic: What the Pipeline Should Actually Do

Retries happen at three layers in a verification pipeline. The layer you own is integrator-to-vendor, and it is where most production bugs live.

The canonical pattern for transient failures is exponential backoff with jitter. Retry after 1 second, then 2, then 4, then 8, with random offset added to prevent synchronized retry storms across concurrent requests.[1] Cap the total retry duration (typical: 5 to 10 attempts or 2 to 5 minutes elapsed). Verifications that exhaust the cap move to a dead-letter queue. The cap prevents single-verification failures from blocking pipeline capacity on a persistent outage.

import random

import time

def retry_with_backoff(operation, max_attempts=5, base_delay=1.0, max_delay=60.0):

for attempt in range(max_attempts):

try:

return operation()

except RetryableError:

if attempt == max_attempts - 1:

raise

delay = min(max_delay, base_delay * (2 ** attempt))

time.sleep(delay * (0.5 + random.random()))

except PermanentError:

raise # never retry permanent errorsThe single most important retry decision is error classification. Retry on network errors, 5xx responses, 429 rate-limits (honor Retry-After header), and vendor-documented transient errors. Do not retry on 4xx validation errors (except 429), auth failures, or data errors; those indicate a problem the retry cannot fix. The ambiguous middle (429 with unclear cause, 503 that may clear or persist) gets treated as retryable with retry cap so a stuck-open error does not consume resources indefinitely.

Circuit Breakers: The Layer Above Retries

Retry logic handles individual request failures. Circuit breakers handle systematic failures.[2] The three-state machine: Closed (normal, count failures in a sliding window), Open (threshold exceeded, requests fail fast), Half-open (cooldown expired, trial requests test recovery).

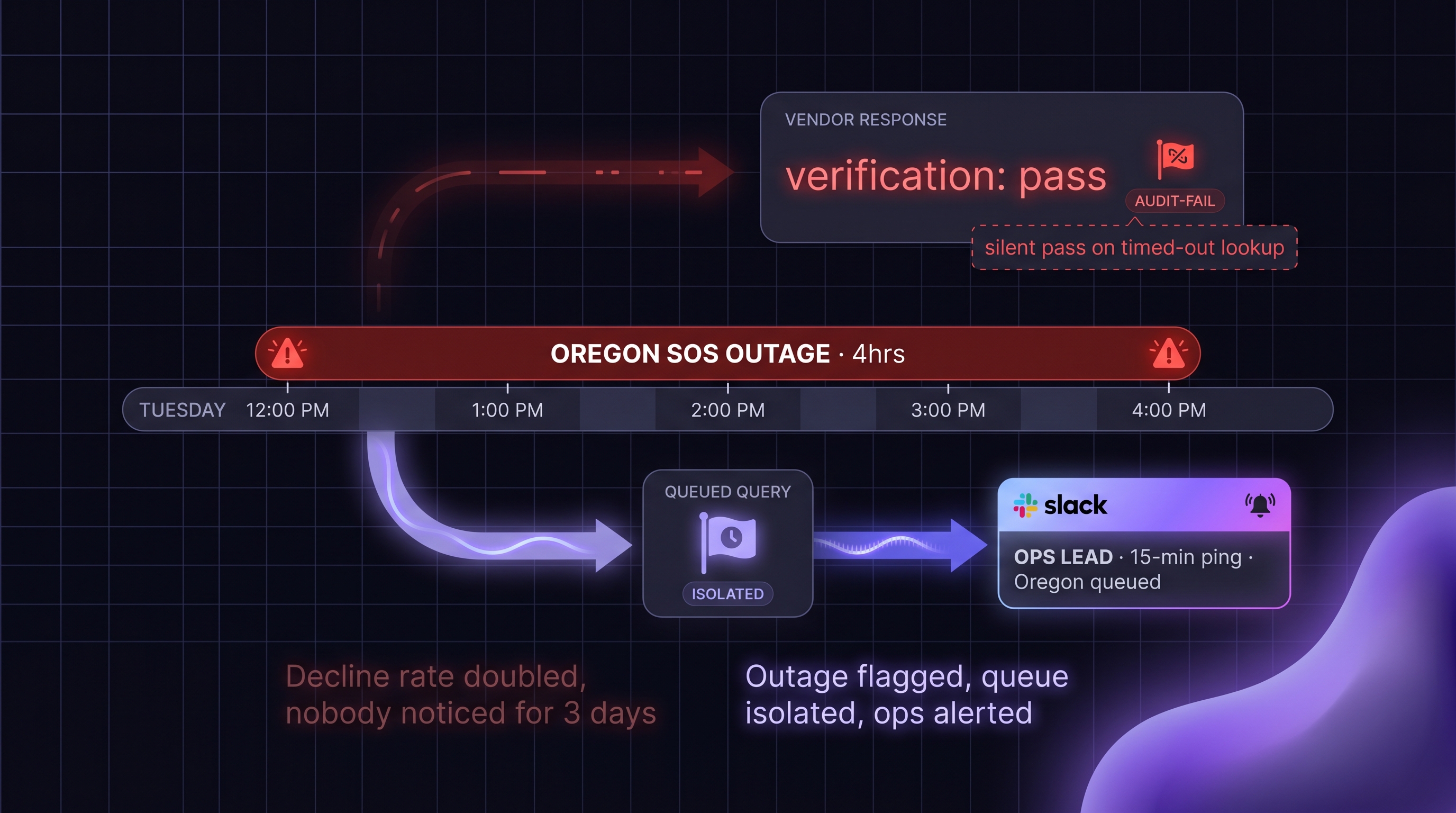

The architectural decision that matters most: scope the breaker per state source, not globally. Oregon being down should not affect California verifications. A global breaker that trips on any state outage makes the pipeline unavailable even when only one state is affected. This is the single biggest resilience differentiator between production-ready KYB vendors and prototype-grade ones, and it is the first question to ask a vendor claiming live-ping architecture.

Failure thresholds should be tuned to each state's baseline reliability. A state source that normally returns 99.9 percent success rate should trip the breaker at a much lower failure rate than one that baselines at 95 percent. Cooldowns are typically 30 seconds to a few minutes, with adaptive cooldowns that extend if the source continues to fail in the half-open state.

Circuit breakers affect what your applicants see during an outage. When the Oregon circuit trips, your pipeline immediately stops retrying Oregon and routes Oregon verifications to the dead-letter queue or graceful-failure path. Oregon applicants see a "verification in progress, state source temporarily unavailable" UX state, rather than a 5-minute spinner that eventually times out. Non-Oregon applicants see normal flow. Your ops team gets an alert. The difference from a no-breaker pipeline is observable in deal flow within the first hour of an outage.

Graceful Failure: Four Options When Retries Are Exhausted

Retries and circuit breakers eventually hit a wall. The pipeline needs a policy for what happens then.

• Queue and retry later. For non-urgent verifications (portfolio monitoring, bulk re-verification). Schedule retry every few minutes to every few hours depending on urgency.

• Decline with explicit documentation. For regulatory-deadline verifications where the clock is running and the alternative is missing the deadline. Return to the upstream with reason code; upstream makes the policy decision.

• Fallback to cached data with flag. For onboarding-urgent cases where the applicant is waiting. Return the most recent cached verification with an explicit "cached, source unavailable" marker. Upstream decides whether to accept.

• Manual escalation. For ambiguous or high-value cases. Route to a human reviewer with full context (state source status, cached data age, applicant context).

Which option to use where is a policy decision, not a vendor decision. For onboarding-critical verifications with the applicant waiting, cached-with-flag or manual-escalation are typical. For portfolio monitoring, queue-and-retry is the default. For BOI 30-day correction windows on foreign reporting companies, decline-with-documentation may be required if the verification cannot be completed by the regulatory deadline.

Whatever option the pipeline chooses, the record must capture the retry attempts that occurred, the circuit-breaker state at the time, the final failure mode, and the policy decision applied. An examiner asking "why did this applicant proceed on cached data" needs to see the evidence that live-ping was attempted and failed.

Idempotency: The Foundation That Makes Retries Safe

Retries only work safely if the operation is idempotent.[3] Retrying a non-idempotent operation produces duplicate effects: duplicate verifications in the audit log, duplicate charges against the vendor API, duplicate records in downstream systems.

The client generates a unique key per logical verification and passes it on every related API call. The vendor stores the key on first receipt and returns the same response for subsequent calls with the same key within the retention window.

curl --location 'https://apigateway.cobaltintelligence.com/v1/search' \

--header 'x-api-key: YOUR_API_KEY' \

--header 'x-idempotency-key: app_20260422_oregon_001' \

--data-urlencode 'searchQuery=Acme Industries LLC' \

--data-urlencode 'state=oregon'Match your retry cap to the vendor's idempotency-key retention window (typically 24 hours to 7 days). Retries after retention expires may execute twice, which at 1M monthly verification volume can mean thousands of dollars in duplicate charges over a sustained outage, plus audit-log duplication that complicates exam defense.

Downstream systems (your audit log, underwriting engine, portfolio monitor) also need to handle duplicate callback delivery. Track incoming callbacks by correlation ID; deduplicate if the same ID arrives twice. True exactly-once delivery is a distributed-systems unicorn. At-least-once delivery plus idempotent processing is exactly-once-effective.

What the Competitive Landscape Actually Offers

Retry logic, circuit breakers, and idempotency keys are table stakes for any 2026 live-ping KYB vendor. Cobalt, Middesk, Heron, Ocrolus, Baselayer, and others all advertise resilience features of some form. The differentiation buyers should test for during POC is not whether the vendor has the features but how the vendor handles the edge cases:

• Are circuit breakers scoped per state source or globally?

• Is the idempotency-key retention window long enough to cover a realistic retry cap?

• Can the integrator query the dead-letter queue, or is it vendor-internal only?

• Is the graceful-failure policy documented per verification class?

• Is the runbook for state-source outages concrete (what the ops team actually does) or aspirational (what the marketing says)?

Ask these in the POC, not after the contract. A vendor that cannot answer any of them in an hour of technical conversation is telling you something about the architecture behind the marketing.

Business-Consequence Monitoring: The Six Metrics That Matter

The metrics for pipeline resilience are distinct from verification-quality metrics. Six translate directly to business impact.

• Retry rate per state. Baseline it; alert on sustained deviation. A rising rate on California is a leading indicator of an outage that will hit deal flow within hours.

• Retry success rate. High-and-succeeding = bouncy but recovering source. High-and-failing = time to trip the breaker.

• Circuit-breaker trip count per state. A rising trip count on Oregon is the signal to update SLA expectations with downstream tenants or adjust portfolio-mix pricing.

• Time-in-open-state per breaker. How long each breaker stays open between trip and recovery. Longer open states extend the pipeline-perceived outage and the ops-team alert fatigue.

• Dead-letter queue growth rate. Growing queue = verifications silently disappearing. At volume, even single-digit daily dead-letters translate to measurable deal-flow loss.

• Idempotency-key hit rate. High rate = aggressive retry behavior upstream. Diagnose the upstream before vendor overage charges hit the invoice.

Alert thresholds tuned to baselines, not absolutes. A callback success rate of 92 percent might be fine for one shop and a crisis for another. Bake in a two-week baselining period after each deployment, then alert on deviations.

.png)