What the New Rules Require

The regulations formalize a two-step burden-shifting framework for disparate impact claims in lending:[1]

Step 1: A claimant demonstrates that a facially neutral policy or practice produces a disproportionately negative effect on a protected class. Under the LAD, the protected classes are broader than those under federal law and include creed, ancestry, civil union status, domestic partnership status, pregnancy, gender identity and expression, sexual orientation, disability, and armed forces service status.[2]

Step 2: The lender must prove that the challenged practice furthers a legitimate, bona fide interest, and that no less discriminatory alternative exists.

The critical shift: the Division deliberately removed the phrase "equally effective" from the alternative-practice standard.[3] A lender may now be liable even when a less discriminatory alternative would require "somewhat more labor, time, and resources" or would be "less efficient or more costly" than the current practice. In plain terms, "It costs more to do it the fair way" is no longer a defense.

The Division also flagged blanket credit-score screens for heightened scrutiny.[4] If decisions based solely on credit scores produce a disparate impact, the lender cannot defend the screen by arguing that individual analysis would be more labor-intensive. The burden is on the lender to prove that no viable, less discriminatory alternative exists.

The AI Angle: Automated Decisioning Under the Microscope

The regulations go further than any prior state-level framework on algorithmic accountability.[5] The Division requires covered entities to "take reasonable steps to carefully consider and evaluate the design and testing of automated decision-making tools." That language applies to all LAD contexts, not just employment.

For alternative lenders and MCA providers running automated underwriting, three implications stand out:

- Liability does not transfer to the vendor. If your scoring model or decisioning API produces a disparate impact, you bear the liability. Relying on a vendor's assurance that its model is "fair" is not a defense.[6]

- Pre-deployment testing matters. Tools that have not undergone adequate testing to demonstrate they do not adversely affect protected classes may violate the LAD before a single decision is made.

- Opacity is a risk factor. Black-box models that cannot explain why an applicant was denied make defending against a disparate impact claim significantly harder.

The direction is clear: if you deploy it, you own its outcomes.
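As a rough illustration of what pre-deployment testing can look like, the sketch below computes approval rates per group on a test set and divides each by a reference group's rate. The regulations described here do not prescribe a particular metric. The 0.8 cutoff is an assumption borrowed from the "four-fifths" heuristic used in employment-selection analysis, and the group labels and sample data are made up for the example.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate per group from (group_label, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {g: approvals[g] / totals[g] for g in totals}


def adverse_impact_ratios(decisions, reference_group):
    """Divide each group's approval rate by the reference group's rate."""
    rates = approval_rates(decisions)
    ref_rate = rates[reference_group]
    if ref_rate == 0:
        raise ValueError("reference group has no approvals; ratio undefined")
    return {g: rate / ref_rate for g, rate in rates.items()}


# Illustrative, made-up model outputs: (group, approved)
sample = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)

ratios = adverse_impact_ratios(sample, reference_group="A")

# Assumed review threshold (four-fifths heuristic), not a legal standard.
REVIEW_THRESHOLD = 0.8
flagged = {g: r for g, r in ratios.items() if r < REVIEW_THRESHOLD}
```

A ratio below the chosen threshold is a prompt for closer review, not a legal conclusion. Running the same check on a vendor's model outputs gives you your own evidence rather than the vendor's assurance.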

What Alternative Lenders Must Do Now

Compliance with New Jersey's disparate impact rules is not a future obligation. The rules took effect December 15, 2025.[7] Here is what risk and compliance teams should prioritize:

1. Audit your blanket screens. Identify every automatic denial trigger: minimum credit scores, time-in-business cutoffs, geographic exclusions, industry blacklists. For each, ask whether the same risk-management objective can be achieved with a less exclusionary approach.

2. Document your model governance. For every automated tool in your decisioning stack, maintain records of what it evaluates, how it was trained, what adverse impact testing was performed, and what alternatives were considered.

3. Pressure-test your vendors. Request adverse impact testing results from every third-party scoring or decisioning provider. If a vendor cannot document bias testing, that gap is now your regulatory exposure.

4. Establish ongoing monitoring. A model that was fair at deployment can drift. Build periodic disparate impact analysis into your compliance calendar as a recurring process, not a one-time exercise.

5. Prepare for the alternative-practice challenge. Claimants can prevail by identifying an alternative that achieves the same business objective with less discriminatory effect, even if it costs more. Your defense must address why no such alternative is viable.
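One way to approach items 1 and 5 together is to score a blanket screen and a candidate alternative on the same historical applicant sample, then record both the approval-rate gap between groups and the risk outcome. The sketch below is a minimal version of that comparison. The field names, the 650 and 600 score cutoffs, the 24-month history rule, and the eight sample applicants are all hypothetical and chosen only to make the example run.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Applicant:
    group: str               # protected-class label, used for testing only
    credit_score: int
    months_in_business: int
    defaulted: bool          # observed outcome in a historical sample


def blanket_screen(a):
    """Hypothetical current policy: a single credit-score floor."""
    return a.credit_score >= 650


def alternative_screen(a):
    """Hypothetical alternative: lower floor when operating history is longer."""
    return a.credit_score >= 650 or (
        a.credit_score >= 600 and a.months_in_business >= 24
    )


def evaluate(policy, applicants, reference_group):
    """Summarize approval rates by group and the default rate among approvals."""
    rates = {}
    for g in {a.group for a in applicants}:
        members = [a for a in applicants if a.group == g]
        rates[g] = sum(policy(a) for a in members) / len(members)
    approved = [a for a in applicants if policy(a)]
    default_rate = (
        sum(a.defaulted for a in approved) / len(approved) if approved else 0.0
    )
    ref = rates[reference_group]
    worst_ratio = min(r / ref for r in rates.values()) if ref else 0.0
    return {
        "approval_rates": rates,
        "worst_ratio": worst_ratio,
        "default_rate": default_rate,
    }


# Made-up historical sample for illustration only.
applicants = [
    Applicant("A", 700, 12, False),
    Applicant("A", 680, 36, False),
    Applicant("A", 660, 30, True),
    Applicant("A", 620, 10, True),
    Applicant("B", 690, 48, False),
    Applicant("B", 630, 36, False),
    Applicant("B", 610, 60, False),
    Applicant("B", 590, 24, True),
]

blanket_result = evaluate(blanket_screen, applicants, reference_group="A")
alternative_result = evaluate(alternative_screen, applicants, reference_group="A")
```

Saving the output of each comparison, along with the date and the alternatives considered, also feeds the governance record in item 2. Rerunning it on a schedule covers the monitoring in item 4.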

The Objective Data Advantage

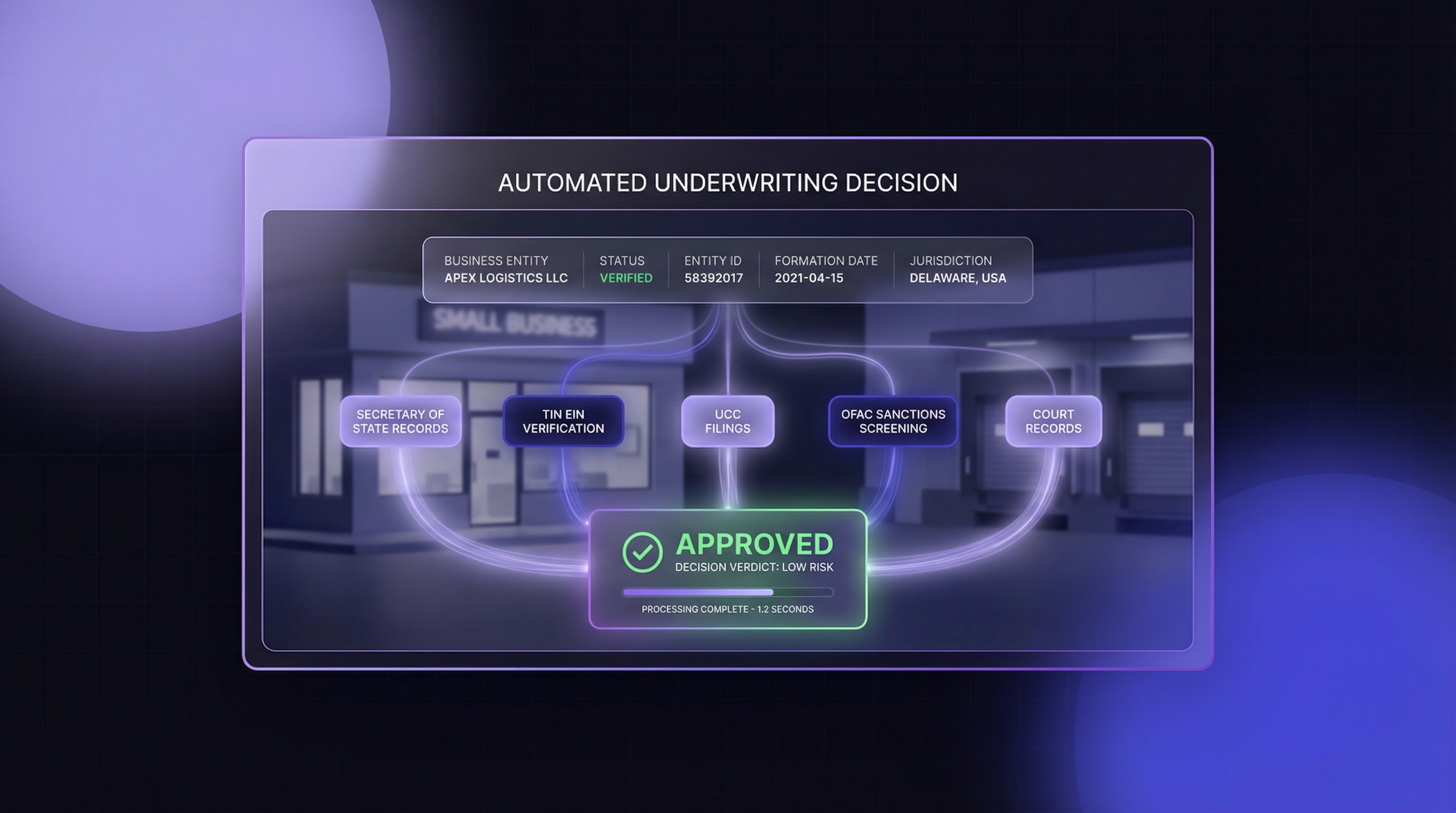

One of the most defensible strategies for reducing disparate impact risk in automated underwriting is anchoring decisions to objective, verifiable data points rather than opaque scores or subjective assessments.

Government-sourced business verification data, such as Secretary of State records, provides a factual, bias-free input: Is the entity active? When was it formed? Who are the officers on file? These are publicly available facts with no embedded demographic signal. Incorporating primary-source verification data into underwriting models strengthens both accuracy and defensibility, giving lenders a concrete answer when regulators ask how they mitigate disparate impact.
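To make the idea concrete, here is a minimal sketch of turning a Secretary of State record into model inputs that answer the three questions above. The `EntityRecord` fields and the `verification_features` helper are hypothetical. They do not reflect any state's data format or any provider's API response.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class EntityRecord:
    """Hypothetical shape of a business registration record."""
    status: str
    formation_date: date
    officers: tuple


def verification_features(record, as_of):
    """Derive simple, explainable inputs from a registration record."""
    years = (as_of - record.formation_date).days / 365.25
    return {
        "is_active": record.status.strip().lower() == "active",
        "years_since_formation": round(years, 1),
        "officer_count": len(record.officers),
    }


# Made-up record for illustration.
record = EntityRecord(
    status="Active",
    formation_date=date(2019, 3, 1),
    officers=("Jane Doe", "John Roe"),
)
features = verification_features(record, as_of=date(2025, 3, 1))
```

Each feature maps to a fact an underwriter can state in plain language, which helps when a denial has to be explained. Any derived feature should still go through the same adverse impact testing as the rest of the model.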

Federal vs. State: The Regulatory Divergence

These rules did not emerge in a vacuum. In early 2025, the Trump administration instructed federal agencies to "eliminate the use of disparate impact to the maximum degree possible."[8] The EEOC stepped back from pursuing disparate impact theories. HUD signaled plans to amend its own standard.

New Jersey went the other direction. Attorney General Matthew Platkin described the LAD as the "oldest and strongest state civil rights law in the country."[2] The Division stated its regulations strive to "meet or exceed the floor set by HUD in enforcing the Fair Housing Act."

For multi-state lenders, the message is straightforward: federal rollbacks do not preempt New Jersey's rules. A practice that satisfies a relaxed federal standard may still violate state law. And New Jersey is unlikely to be alone. Colorado, Illinois, and California have all advanced AI governance frameworks that intersect with lending.[9]

Build your compliance program to the highest standard you will face, not the lowest. If your underwriting model can survive New Jersey's scrutiny, it can survive anywhere.

Cobalt Intelligence provides real-time business verification data from all 50 Secretary of State databases through a single API. For more on how objective, government-sourced data supports defensible underwriting decisions, schedule a conversation.
