Why Platform-Scale KYB Is Architecturally Different

A single-tenant lender runs verification on its own applicant pool and owns the risk decision end-to-end. A platform runs verification on behalf of N downstream customers, each with their own applicant pool, their own risk framework, their own compliance posture. The architectural assumptions that work for the single-tenant case fall over at the platform layer.

The fan-out problem

Stripe Connect, one of the most visible platform KYB products, describes this pattern: Stripe handles KYC and KYB for the platform's connected accounts, letting the platform's sellers get verified faster.[1] The architectural shift is from one lender verifying one pool to one platform verifying many pools through the same pipeline. Every capacity assumption, every rate-limit budget, and every monitoring threshold has to handle the aggregate load across all downstream tenants simultaneously.

The compliance-boundary problem

When a lender verifies a business, the lender owns the decision and the audit trail. When a platform verifies a business on behalf of a downstream customer, the responsibility split is more complex. Some verification artifacts belong to the platform (the state-registry query timestamp, the vendor response log). Some belong to the downstream customer (the underwriting decision, the final accept / decline). Enigma's 2025 KYB-for-marketplaces framework explicitly describes this as a shared-responsibility model: the platform handles verification infrastructure, the downstream customer handles decisioning and documentation.[2] The framing matters because ambiguity in where the split sits produces compliance gaps that neither party notices until an examiner asks.

The coverage-quality pressure

A single-tenant lender can accept that its KYB vendor is weak on Delaware name changes because Delaware is a manageable portion of the applicant pool. A platform serving downstream customers with different portfolio mixes (one heavy Delaware, one heavy Texas, one heavy California) cannot. A coverage weakness in any one state produces a bad experience for whichever downstream customer is most concentrated in that state. The platform's minimum coverage quality has to be high across all states, not just the ones that dominate the aggregate volume.

When cached-first only is the right architecture

Not every platform needs live-ping. A platform whose tenant base is concentrated in established-business verification (entities more than 24 months old, stable operational history, low-fraud-risk industries like B2B SaaS or enterprise procurement) may find that a cache-only architecture with weekly or monthly refresh is cost-efficient enough that live-ping is architectural overkill. The decision depends on tenant-mix concentration: if the applicant pool is weighted heavily toward newly-formed entities, multi-state operators, or regulated verticals, live-ping becomes load-bearing. If the pool is weighted toward mature, stable businesses with low freshness sensitivity, cached data with periodic refresh is defensible. The question is not "which architecture is correct" but "which architecture fits my tenant mix at what cost."

What Breaks First When Volume Goes 10x, 100x, 1000x

The failure modes at 10x are not the same as at 100x or 1000x. Each scale introduces new pressure points.

10x: The synchronous integration stops working

At 1x single-tenant scale, synchronous integration with a verification vendor often works. State-response latency affects a fraction of volume, and the onboarding UX can absorb occasional 30-second waits. At 10x, the synchronous path starts cascading. Connection pools exhaust. Timeout rates climb. The integration has to shift to async request-reply. This is typically the first architectural pivot platforms make.
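The shift to async request-reply can be sketched as follows. This is a minimal illustration, not a production design: the `AsyncVerifier` class, its in-memory result store, and the `_call_vendor` stand-in are all hypothetical names introduced here. The caller gets a request ID back immediately and polls (or is notified) later, while a semaphore bounds how many upstream calls are in flight so a burst cannot exhaust the connection pool.

```python
import asyncio
import uuid


class AsyncVerifier:
    """Accepts verification requests immediately and completes them in the background."""

    def __init__(self, max_in_flight: int = 10, timeout_seconds: float = 5.0):
        self._results: dict[str, dict] = {}
        self._limit = asyncio.Semaphore(max_in_flight)  # bounds upstream concurrency
        self._timeout = timeout_seconds
        self._tasks: set[asyncio.Task] = set()

    async def _call_vendor(self, business_name: str) -> dict:
        # Stand-in for the slow upstream call (hypothetical vendor response).
        await asyncio.sleep(0.01)
        return {"business_name": business_name, "status": "active"}

    async def _run(self, request_id: str, business_name: str) -> None:
        async with self._limit:
            try:
                result = await asyncio.wait_for(
                    self._call_vendor(business_name), timeout=self._timeout
                )
                self._results[request_id] = {"state": "done", "result": result}
            except asyncio.TimeoutError:
                self._results[request_id] = {"state": "timed_out", "result": None}

    def submit(self, business_name: str) -> str:
        """Return a request ID right away; the work continues in the background."""
        request_id = str(uuid.uuid4())
        self._results[request_id] = {"state": "pending", "result": None}
        task = asyncio.get_running_loop().create_task(
            self._run(request_id, business_name)
        )
        self._tasks.add(task)  # keep a reference so the task is not garbage-collected
        task.add_done_callback(self._tasks.discard)
        return request_id

    def poll(self, request_id: str) -> dict:
        return self._results[request_id]

    async def drain(self) -> None:
        """Wait for all outstanding verifications (useful in tests and shutdown)."""
        await asyncio.gather(*self._tasks)
```

In a real deployment the result store would be durable and the reply would more likely arrive by webhook than by polling; the sketch only shows the decoupling of request acceptance from upstream latency.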

100x: The rate-limit budget becomes a cross-tenant coordination problem

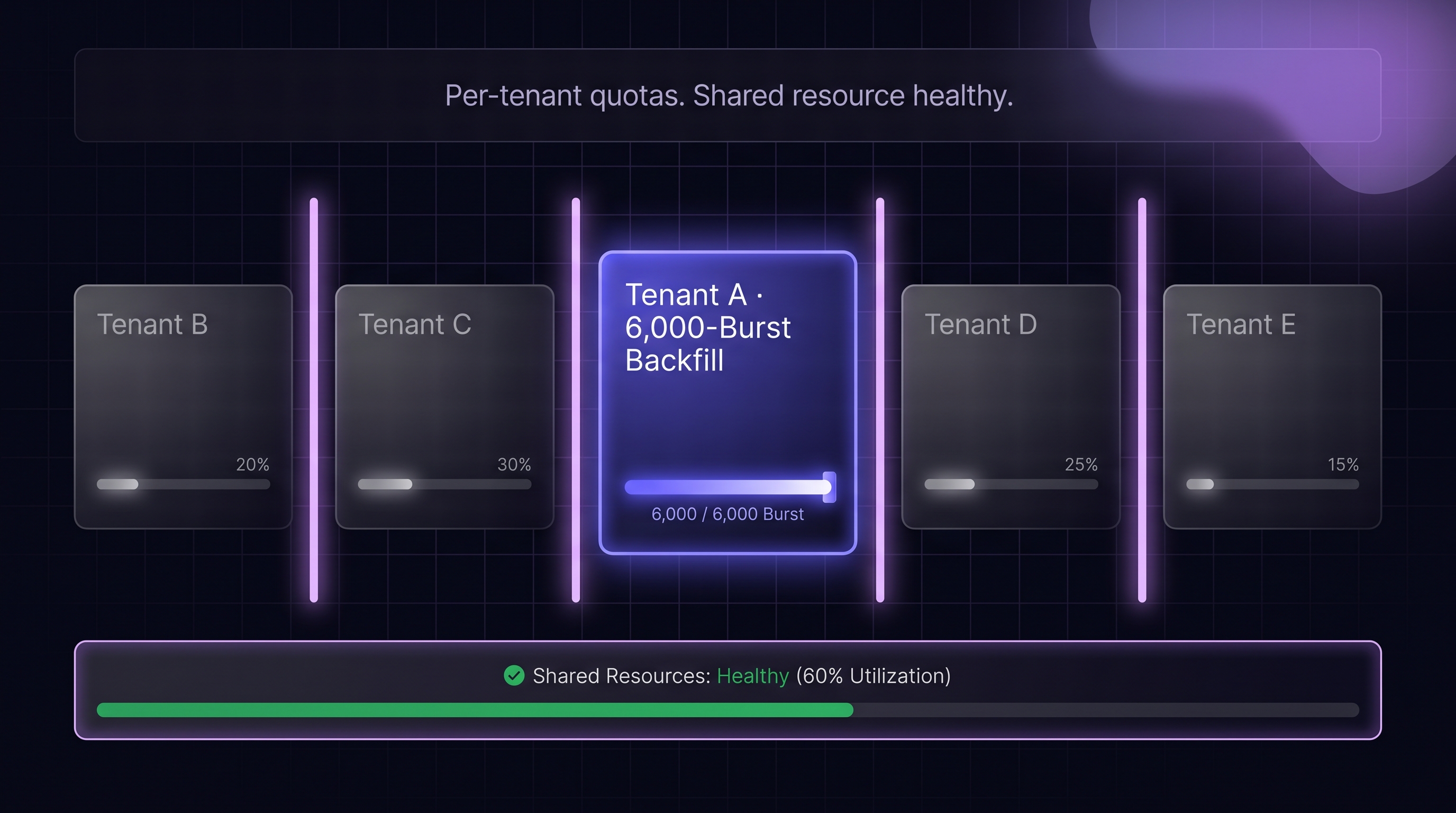

At 100x scale, the platform is pushing enough traffic against the upstream verification vendor that rate-limit budgets become a coordinated resource. One noisy downstream customer can exhaust the platform's entire budget in a burst, degrading verification for every other tenant. The platform has to implement tenant-aware rate shaping: per-customer quotas, fair-share queuing, burst absorption, and explicit throttling when a customer exceeds its allocation.

Concrete failure example (anonymized). A platform processing 40,000 verifications per month hit rate-limit exhaustion when a single fintech tenant ran a 6,000-applicant backfill on a Tuesday morning. The backfill consumed the platform's daily upstream-vendor quota in under 2 hours, degrading verification latency across the entire tenant base for roughly 3 hours until the platform could negotiate an emergency quota raise with the vendor. The remediation cost was not just the engineering incident response; it was the confidence hit from several unrelated tenants whose onboarding was degraded through no action of their own. The lesson: rate-limit policy has to anticipate tenant-level bursts, not just aggregate steady-state volume. The fix was a per-tenant daily quota with burst allowance capped at 20 percent of platform-wide daily budget, plus throttling when a tenant exceeded its ceiling, plus a pre-notification API for tenants planning backfills so the platform could pre-provision capacity.
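The remediation described above can be sketched as a small quota ledger. This is an illustration under stated assumptions: the class and method names are hypothetical, the 20 percent burst cap is read as "a tenant may exceed its base quota by at most 20 percent of the platform-wide daily budget", and a pre-notified backfill is modeled simply as extra capacity added to that tenant's ceiling.

```python
from dataclasses import dataclass, field


@dataclass
class TenantQuotaLedger:
    """Tracks per-tenant daily usage against a base quota plus a capped burst."""

    platform_daily_budget: int
    burst_cap_percent: int = 20  # burst allowance as a share of the platform budget
    base_quota: dict[str, int] = field(default_factory=dict)
    pre_provisioned: dict[str, int] = field(default_factory=dict)
    used_today: dict[str, int] = field(default_factory=dict)

    def ceiling(self, tenant: str) -> int:
        burst = self.platform_daily_budget * self.burst_cap_percent // 100
        return (
            self.base_quota.get(tenant, 0)
            + burst
            + self.pre_provisioned.get(tenant, 0)
        )

    def announce_backfill(self, tenant: str, expected_volume: int) -> None:
        # Assumption: the platform has already secured matching upstream capacity.
        self.pre_provisioned[tenant] = (
            self.pre_provisioned.get(tenant, 0) + expected_volume
        )

    def try_consume(self, tenant: str, count: int = 1) -> bool:
        """Return True and record usage, or False if the tenant must be throttled."""
        used = self.used_today.get(tenant, 0)
        if used + count > self.ceiling(tenant):
            return False
        self.used_today[tenant] = used + count
        return True
```

With a ledger like this, an unannounced backfill is throttled at the tenant's ceiling instead of draining the shared budget, while an announced one proceeds against capacity reserved for it.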

1000x: Data pipelines and observability become the bottleneck

At 1000x scale, the verification logs alone become a significant data-engineering problem. Every verification produces a response payload, a component-signal breakdown, a timestamp, a correlation ID, and downstream forwarding. At sustained high volume, the logging infrastructure, the observability stack, and the downstream data-forwarding pipeline all become capacity-constrained in ways that do not show up at smaller scale. The platform has to treat its verification-log pipeline as a first-class engineering concern, not a side-effect of the verification calls themselves.

The hidden cost: policy drift across tenants

As downstream-customer count grows, each customer evolves its verification policy at its own pace. Some tighten thresholds after a fraud incident; some loosen them after a manual-review bottleneck. The platform's default policy diverges from the actual blend across tenants. Without explicit per-tenant policy versioning, the platform cannot accurately describe "what verification we run" for any given customer at any given time.

The Multi-Tenant Verification Architecture

A production platform-KYB architecture has several distinct layers that are optional at single-tenant scale.

The tenant-aware ingress layer

Every incoming verification request carries a tenant identifier. The ingress layer maps the tenant to its policy (freshness thresholds, confidence-score tiers, per-state routing, pass-through fields). Two different tenants running the same applicant through the platform can get different verification responses because their policies differ.
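A minimal sketch of that mapping, assuming a policy reduced to two illustrative knobs (a freshness threshold and a confidence floor). The tenant IDs, policy values, and outcome labels are hypothetical. The same applicant data yields different outcomes because the tenant's policy, not the applicant, decides the result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantPolicy:
    version: str
    max_record_age_days: int  # freshness threshold
    min_confidence: float     # confidence floor for an automatic pass


# Hypothetical policy table; in practice this would come from a policy service.
POLICIES = {
    "tenant-a": TenantPolicy(version="v3", max_record_age_days=7, min_confidence=0.90),
    "tenant-b": TenantPolicy(version="v1", max_record_age_days=30, min_confidence=0.70),
}
DEFAULT_POLICY = TenantPolicy(version="default-v1", max_record_age_days=7, min_confidence=0.90)


def evaluate(tenant_id: str, record_age_days: int, confidence: float) -> dict:
    """Resolve the tenant's policy and apply it to one verification result."""
    policy = POLICIES.get(tenant_id, DEFAULT_POLICY)
    if record_age_days > policy.max_record_age_days:
        outcome = "refresh_required"
    elif confidence < policy.min_confidence:
        outcome = "escalate"
    else:
        outcome = "pass"
    return {
        "tenant_id": tenant_id,
        "policy_version": policy.version,  # captured for later audit
        "outcome": outcome,
    }
```

For a record that is 14 days old with confidence 0.80, `evaluate("tenant-a", 14, 0.80)` asks for a refresh while `evaluate("tenant-b", 14, 0.80)` passes.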

The per-tenant rate-limit and quota layer

The platform's aggregate rate-limit budget (against the upstream vendor, against state sources if the platform integrates direct) is divided across tenants. Per-tenant quotas prevent one customer from consuming the entire budget. Fair-share queuing ensures latency is evenly distributed. Burst-absorption buffers let a customer exceed its steady-state quota temporarily without breaking the aggregate contract.
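Fair-share queuing can be illustrated with a simple round-robin over per-tenant queues. This is one possible isolation model, not the only one (weighted fair-share and token buckets are alternatives), and the `FairShareQueue` class is a hypothetical name for this sketch. A tenant that enqueues a large burst waits behind its own backlog rather than pushing other tenants' requests back.

```python
from collections import deque
from typing import Any, Optional


class FairShareQueue:
    """Round-robin dequeue across tenants so one backlog cannot starve the others."""

    def __init__(self) -> None:
        self._queues: dict[str, deque] = {}
        self._order: deque = deque()  # rotation order of known tenants

    def put(self, tenant: str, item: Any) -> None:
        if tenant not in self._queues:
            self._queues[tenant] = deque()
            self._order.append(tenant)
        self._queues[tenant].append(item)

    def get(self) -> Optional[tuple]:
        """Return (tenant, item) from the next tenant with pending work, or None."""
        for _ in range(len(self._order)):
            tenant = self._order[0]
            self._order.rotate(-1)  # move this tenant to the back of the rotation
            pending = self._queues[tenant]
            if pending:
                return tenant, pending.popleft()
        return None
```

If tenant A enqueues three requests and tenant B then enqueues one, B's request is served second, not fourth.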

The tenant-scoped audit trail

Every verification record is tagged with the tenant that requested it. Audit trails are queryable per tenant; a downstream customer's compliance team can pull the verifications that relate to their applicant pool without seeing other tenants' data. The logging infrastructure has to support this scoping natively.
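One way to make the scoping native is to put the tenant identifier in the log schema and make it a required argument of every read path. The sketch below uses an in-memory SQLite database purely for illustration; the table name, columns, and function names are assumptions, not a prescribed schema.

```python
import sqlite3


def open_audit_store() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE verification_log (
            correlation_id TEXT PRIMARY KEY,
            tenant_id      TEXT NOT NULL,
            policy_version TEXT NOT NULL,
            queried_at     TEXT NOT NULL,  -- ISO 8601 timestamp
            outcome        TEXT NOT NULL
        )
        """
    )
    # Index on tenant first so per-tenant audit pulls do not scan other tenants' rows.
    conn.execute(
        "CREATE INDEX idx_log_tenant ON verification_log (tenant_id, queried_at)"
    )
    return conn


def record(conn, correlation_id, tenant_id, policy_version, queried_at, outcome):
    conn.execute(
        "INSERT INTO verification_log VALUES (?, ?, ?, ?, ?)",
        (correlation_id, tenant_id, policy_version, queried_at, outcome),
    )


def audit_trail_for(conn, tenant_id: str) -> list:
    """Return only the requesting tenant's records, oldest first."""
    cursor = conn.execute(
        "SELECT correlation_id, policy_version, queried_at, outcome "
        "FROM verification_log WHERE tenant_id = ? ORDER BY queried_at",
        (tenant_id,),
    )
    return cursor.fetchall()
```

Because `audit_trail_for` has no code path that omits the tenant filter, a compliance export cannot accidentally include another tenant's verifications.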

The downstream-delivery layer

Verification results flow back to the requesting tenant through the platform's API or webhook. The pass-through format is defined by the platform's contract with each tenant: some want raw vendor response, some want normalized fields, some want a platform-decided pass / fail signal. The delivery layer translates from the platform's internal representation to the tenant-specific format.
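The translation step might look like the sketch below. The three format names, the field names in the internal record, and the pass rule are hypothetical and stand in for whatever each tenant's contract actually specifies.

```python
def render_for_tenant(internal: dict, delivery_format: str) -> dict:
    """Translate the platform's internal record into a tenant-specific payload."""
    if delivery_format == "raw":
        # Tenant wants the vendor response untouched (copied to avoid aliasing).
        return dict(internal["vendor_response"])
    if delivery_format == "normalized":
        return {
            "legal_name": internal["legal_name"],
            "status": internal["status"],
            "confidence": internal["confidence"],
        }
    if delivery_format == "pass_fail":
        passed = (
            internal["status"] == "active"
            and internal["confidence"] >= internal["min_confidence"]
        )
        return {"result": "pass" if passed else "fail"}
    raise ValueError(f"unknown delivery format: {delivery_format}")
```

Keeping the translation in one function means a new tenant format is an additive change that does not touch the verification path.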

Cost Allocation in a Multi-Tenant KYB Platform

The unit-economics of platform KYB depend on how the platform prices verifications and how it allocates costs across tenants.

The per-verification cost driver

Each verification against the upstream vendor has a cost. Live-ping calls cost more than cached; per-state paid queries (Delaware status at $15-20 per lookup passed through at cost, New Jersey status fees) add further cost. Per-verification cost at platform scale typically ranges from roughly $0.25 to $1.50 depending on state mix, vendor tier, and whether live-ping is primary or fallback. Delaware-heavy traffic skews higher. A platform running 1 million verifications per month at an average $0.75 per verification incurs $750,000 per month in direct upstream-vendor cost; at $1.20 (Delaware-heavy mix plus live-ping fallback), the same volume costs $1.2M per month. The sensitivity to tenant mix is real enough that state-mix-aware pricing becomes a commercial lever, not just an infrastructure concern.
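The arithmetic above, worked through with the figures from the text. The function name is illustrative; `Decimal` is used so the dollar amounts stay exact.

```python
from decimal import Decimal


def monthly_vendor_cost(verifications_per_month: int, avg_cost: Decimal) -> Decimal:
    """Direct upstream-vendor cost for one month at a given blended unit cost."""
    return verifications_per_month * avg_cost


baseline = monthly_vendor_cost(1_000_000, Decimal("0.75"))        # $750,000
delaware_heavy = monthly_vendor_cost(1_000_000, Decimal("1.20"))  # $1,200,000
mix_sensitivity = delaware_heavy - baseline                       # $450,000 per month
```

At this volume, the shift in tenant mix alone moves direct cost by $450,000 per month, which is the sensitivity the paragraph refers to.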

Pass-through versus absorbed

Platforms typically choose between passing the per-verification cost through to the tenant (a pricing model where verifications are line items) or absorbing the cost and pricing by tier (a pricing model where the tenant pays a flat rate regardless of verification count). Each has implications: pass-through aligns incentives but creates billing complexity; absorbed simplifies billing but exposes the platform to margin compression when a tenant's verification volume exceeds modeled assumptions.

The usage-tier pattern

A common middle-ground is tiered usage: the tenant pays a flat rate up to a threshold, then pays per-verification above it. The tier boundary is set so that the tenant's typical volume is within the flat tier, with overage protecting the platform from heavy-volume outliers. The tier boundary has to be re-tuned as the platform's cost structure evolves.
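A sketch of the tiered calculation, with hypothetical numbers: the flat fee, included volume, and overage rate below are placeholders chosen for illustration, not figures from any real price list.

```python
from decimal import Decimal


def monthly_invoice(
    verifications: int,
    flat_fee: Decimal,
    included_verifications: int,
    overage_rate: Decimal,
) -> Decimal:
    """Flat fee up to the tier boundary, per-verification pricing above it."""
    overage = max(0, verifications - included_verifications)
    return flat_fee + overage * overage_rate
```

With a $5,000 flat fee covering 10,000 verifications and a $0.90 overage rate, a tenant running 8,000 verifications pays $5,000, and a tenant running 12,000 pays $5,000 + 2,000 x $0.90 = $6,800.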

The state-mix pricing question

If Delaware status calls cost more than California status calls (because of state-imposed fees), should the pricing reflect that? Most platforms flatten this into a single per-verification price because the complexity of exposing state-mix pricing to tenants is not worth the margin optimization. The platform absorbs the variance as part of its cost structure.

Per-Tenant Policy Management: The Configuration Problem

Each tenant running on the platform has its own policy. Managing those policies without drift or conflict is a nontrivial engineering problem.

The policy-as-configuration pattern

Policies live in structured configuration (JSON, YAML, a dedicated policy service) rather than hardcoded logic. Each policy has a version, an owner (the tenant's compliance lead or risk team), and a change-history log. Policy updates go through a review process, not a direct code push.

The policy-validation layer

Before a policy takes effect, a validation layer checks it against platform constraints: confidence thresholds within legal ranges, state coverage consistent with the tenant's contractual entitlement, pass-through fields within the set the platform exposes. Invalid policies are rejected before they hit production traffic.
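A validation layer of this kind could be sketched as below. The policy keys, the exposed-field set, and the assumption that a valid confidence threshold lies between 0 and 1 are all illustrative; a real platform would substitute its own schema and constraints.

```python
# Hypothetical set of fields the platform is willing to pass through to tenants.
EXPOSED_FIELDS = frozenset(
    {"legal_name", "status", "formation_date", "registered_agent"}
)


def validate_policy(policy: dict, entitled_states: set) -> list:
    """Return a list of human-readable errors; an empty list means the policy is valid."""
    errors = []

    threshold = policy.get("min_confidence")
    if not isinstance(threshold, (int, float)) or not 0.0 <= threshold <= 1.0:
        errors.append("min_confidence must be a number between 0 and 1")

    extra_states = set(policy.get("states", [])) - entitled_states
    if extra_states:
        errors.append(
            f"states outside contractual entitlement: {sorted(extra_states)}"
        )

    unknown_fields = set(policy.get("pass_through_fields", [])) - EXPOSED_FIELDS
    if unknown_fields:
        errors.append(
            f"pass-through fields not exposed by the platform: {sorted(unknown_fields)}"
        )

    return errors
```

Returning every error at once, rather than failing on the first, lets the tenant's policy owner fix a rejected change in a single review round.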

Policy versioning for audit

Every verification record captures the policy version that was in effect at the moment of the verification. An examiner later reviewing the decision can reconstruct exactly what rules applied. Without this, policy changes become invisible to audit, which is an examiner finding.

The tenant-default escape hatch

The platform provides a sensible default policy for tenants that do not customize. Small tenants use the default; large tenants customize. The default has to be conservative (accepts less fraud exposure) because permissive defaults expose the platform to tenant-level risk the platform cannot control.

The Shared-Responsibility Model for Compliance

Platform KYB sits in a specific compliance posture that is different from single-tenant.

What the platform owns

• Infrastructure compliance. SOC 2, data-residency, encryption at rest and in transit.

• Verification artifact production. Query timestamps, raw vendor responses, source-registry evidence, audit-ready logs.

• Vendor relationship. Contractual obligations to the upstream verification vendor, rate-limit management, SLA monitoring.

• Platform-level regulatory posture. Any regulations that apply to the platform as an entity (money transmitter licensing, data-broker status, state lender licensing where applicable).

What the downstream tenant owns

• Underwriting decision. The accept / decline logic applied to the verification result.

• Tenant-specific regulatory posture. AML / BSA, lender licensing, state-level compliance for the tenant's business.

• Audit trail of decisioning. The examiner-facing record of why each application was approved or declined.

• Customer communication. Applicant-facing messaging about verification outcomes.

The contract surface

The shared-responsibility model is codified in the contract between platform and tenant. The contract specifies what the platform produces, what the tenant owns, what happens in ambiguous cases. Ambiguity in this contract produces compliance gaps that neither party notices until an examiner asks.

Monitoring a Multi-Tenant Verification Platform

Platform-scale monitoring is distinct from single-tenant monitoring.

• Per-tenant verification volume. Trended by day / week / month, with alerting on unusual spikes or drops.

• Per-tenant latency distributions. Some tenants have state-mix weighted toward slow states; their latency profile differs from the aggregate.

• Per-tenant success and escalation rates. Shifts in a single tenant's escalation rate can signal either a policy-tuning problem or a shift in that tenant's applicant pool.

• Cross-tenant rate-limit utilization. How much of the aggregate budget each tenant consumes. Noisy-neighbor patterns surface here.

• Per-state latency and error rates. Same metrics as single-tenant but aggregated across the platform.

• Policy version distribution. How many tenants are on each policy version; rate of policy updates per tenant.

• Upstream-vendor SLA compliance. What the platform is receiving from its verification vendor relative to contracted SLA.
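As one example from the list above, cross-tenant rate-limit utilization reduces to a share-of-budget calculation. The function names and the 20 percent alert threshold in this sketch are assumptions for illustration.

```python
def budget_shares(calls_by_tenant: dict, aggregate_budget: int) -> dict:
    """Fraction of the aggregate upstream budget each tenant has consumed."""
    if aggregate_budget <= 0:
        raise ValueError("aggregate_budget must be positive")
    return {
        tenant: calls / aggregate_budget
        for tenant, calls in calls_by_tenant.items()
    }


def noisy_neighbors(
    calls_by_tenant: dict, aggregate_budget: int, share_threshold: float = 0.20
) -> list:
    """Tenants whose share of the aggregate budget exceeds the alert threshold."""
    shares = budget_shares(calls_by_tenant, aggregate_budget)
    return sorted(t for t, share in shares.items() if share > share_threshold)
```

Run over a sliding window (for example, the last hour), a check like this surfaces a backfill-style burst while there is still budget left to protect.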

The Four Architecture Decisions That Matter Most

Going into the next architecture review, four decisions separate a platform that scales cleanly to 1000x from one that accumulates multi-tenant debt until it becomes an existential burden at that scale.

Tenant-aware rate-limit and quota enforcement with fair-share queuing. This is the lesson of the 40k-verifications incident above. Aggregate-level budgets without per-tenant isolation fail the first time a single tenant runs a predictable operational event like a backfill. The design choice is which tenant-isolation model to use (fixed quotas vs weighted fair-share vs token-bucket), not whether to have one.

Tenant-configurable policy with version capture in every verification record. A platform that cannot reconstruct "what rules applied to this verification on this date" at audit time loses the examiner conversation for every tenant simultaneously. Configuration-as-code with version history, validation layer, and in-record capture is the only defensible pattern at platform scale.

Shared-responsibility contract with explicit ownership boundaries. Ambiguity here is the most expensive kind of platform debt, because it only surfaces during a regulatory incident and by then the cost of retrofitting ownership boundaries is orders of magnitude higher than the cost of writing them cleanly up front.

Upstream-vendor failure runbook with graceful-failure policy per verification class. When the upstream vendor or a state source goes down, the platform's response is what every tenant sees. A documented runbook that distinguishes onboarding-urgent from portfolio-monitoring from regulatory-deadline verifications, and routes each to a different fallback, is what separates the platforms that survive an outage cleanly from the ones whose tenants churn after a single incident.
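The runbook's routing rule can be expressed as a small lookup. The three verification classes come from the text; the specific fallback action assigned to each class is a hypothetical example, since the right fallback depends on the platform's risk appetite and its contracts with tenants.

```python
from enum import Enum


class VerificationClass(Enum):
    ONBOARDING_URGENT = "onboarding_urgent"
    PORTFOLIO_MONITORING = "portfolio_monitoring"
    REGULATORY_DEADLINE = "regulatory_deadline"


# Illustrative fallbacks only; each platform has to choose its own.
FALLBACKS = {
    VerificationClass.ONBOARDING_URGENT: "serve_cached_and_flag_for_reverify",
    VerificationClass.PORTFOLIO_MONITORING: "defer_and_retry_later",
    VerificationClass.REGULATORY_DEADLINE: "escalate_to_manual_review",
}


def route(verification_class: VerificationClass, upstream_healthy: bool) -> str:
    """Pick the handling path for a request given current upstream health."""
    if upstream_healthy:
        return "live_verification"
    return FALLBACKS[verification_class]
```

Encoding the runbook as data makes the graceful-failure policy something the platform can show a tenant in writing and test before an outage happens.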

The remaining operational decisions (tenant-aware ingress, tenant-scoped audit queries, downstream delivery formatting, cost allocation, cross-tenant monitoring) are all necessary but derivative: they fall out of the four above once those are right. Get the four right, and the rest is a matter of engineering discipline. Get any of the four wrong, and the rest cannot compensate.

The Questions Your Downstream Tenants Will Ask You (Be Ready)

Your downstream customers evaluate your platform's KYB feature on the same dimensions they would evaluate a direct vendor. These are the questions they will ask during procurement and during their own examiner cycles. The platform that can answer them crisply wins the account. The platform that deflects loses to a competitor who has thought through the answers.

• What is your upstream verification data source, and is it live-ping or cached? Your data freshness cannot exceed the upstream vendor's; your tenants will want to know the ceiling.

• How do you isolate my rate-limit budget from other tenants? Tenants who have been burned by noisy-neighbor degradation will not accept a handwave on this.

• Can I customize the verification policy, including confidence thresholds, state-specific routing, and freshness requirements? Tenants with non-default applicant pools (Delaware-heavy, newly-formed-heavy) need flexibility you may not yet expose.

• Can I pull an audit trail scoped to my verifications only? A tenant's examiner will not accept "we have it, but it is commingled with other customers' data."

• What is your per-state latency distribution, and how do you handle slow states like Oregon? Tenants with heavy Oregon exposure will ask specifically.

• What happens when the upstream vendor or a state source is down? Tenants want to see your graceful-failure policy in writing before they take platform risk.

• What is the shared-responsibility split in writing? A tenant's general counsel will ask this; have the contract language ready.
